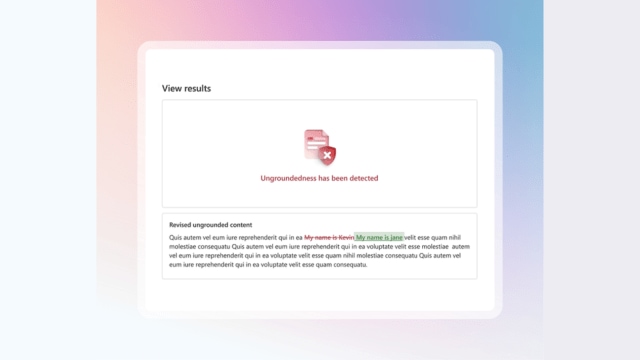

AI hallucinations are a common problem for most AI chatbots. (Image Source: Microsoft)

Since ChatGPT’s launch in 2022, AI chatbots have grown tremendously in both capabilities and popularity. And while all popular AI chatbots are prone to hallucinations, Microsoft claims that its new tool can help with this very issue.

In a blog post, the tech giant recently revealed “Correction”, a tool that aims to automatically fix factually incorrect AI-generated text.

The new tool works by first flagging text that contains some kind of factual error using “Groundedness Detection”, a feature that, according to Microsoft, “identifies ungrounded or hallucinated content”. It then fact-checks the flagged text by comparing it with an accurate source, which can be either a document or uploaded transcripts.
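The flag-then-compare flow described above can be illustrated with a toy sketch. This is not Microsoft's method or the Azure AI Content Safety API: the function names `tokenize` and `flag_ungrounded`, the word-overlap score and the `threshold` value are all assumptions made purely for illustration. It simply flags sentences whose words mostly do not appear in a trusted source document.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def flag_ungrounded(generated, source, threshold=0.5):
    """Return sentences of `generated` with low word overlap against `source`.

    Hypothetical stand-in for a groundedness check: a real system would use
    a trained model rather than simple word overlap.
    """
    source_tokens = tokenize(source)
    flagged = []
    # Naive sentence split on whitespace that follows ., ! or ?
    for sentence in re.split(r"(?<=[.!?])\s+", generated.strip()):
        tokens = tokenize(sentence)
        if not tokens:
            continue
        # Fraction of the sentence's words that also occur in the source
        overlap = len(tokens & source_tokens) / len(tokens)
        if overlap < threshold:
            flagged.append(sentence)
    return flagged


source = "The meeting was held on Monday in Paris."
generated = "The meeting was held on Monday. The CEO resigned yesterday."
print(flag_ungrounded(generated, source))
```

In this example only the second sentence is flagged, since most of its words have no support in the source. A correction step would then rewrite or remove the flagged text using the source as reference.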

Introduced earlier this year in March, Groundedness Detection is similar to Google’s implementation in Vertex AI, which also lets customers ground models using third-party providers, datasets or Google Search.

Available as part of Microsoft’s Azure AI Content Safety API, which is currently in preview, Correction can be used with other AI models like Meta Llama and OpenAI GPT-4o. However, experts have said that tools like these cannot help address the root cause of hallucinations.

According to a recent report by TechCrunch, Os Keyes, a PhD candidate at the University of Washington, says that while this approach can help reduce some problems, it will create new ones.

Last month, Microsoft introduced a new Copilot feature that summarises Word documents, making it easier to go through lengthy documents.
